OpenAI's open-source model: gpt-oss on Azure AI Foundry and Windows AI Foundry | Microsoft Azure Blog

With the launch of OpenAI's gpt-oss models, its first open-weight release since GPT-2, developers and enterprises can run, adapt, and deploy OpenAI models on their own terms.

AI is no longer a layer in the stack: it is becoming the stack. This new era calls for tools that are open, adaptable, and ready to run wherever your ideas live, from cloud to edge and from first experiment to scaled deployment. At Microsoft, we are building a full-stack AI app and agent factory that empowers every developer not just to use AI, but to create with it.

That is the vision behind our AI platform spanning cloud to edge. Azure AI Foundry provides a unified platform for building, fine-tuning, and deploying intelligent agents with confidence, while Foundry Local brings open-source models to the edge, enabling flexible on-device inferencing across billions of devices. Windows AI Foundry builds on this foundation and integrates Foundry Local into Windows 11 to support a secure, low-latency local AI development lifecycle deeply aligned with the Windows platform.

With the launch of OpenAI's gpt-oss models, its first open-weight release since GPT-2, we are giving developers and enterprises unprecedented ability to run, adapt, and deploy OpenAI models entirely on their own terms.

For the first time, you can run OpenAI models such as gpt-oss-120b on a single enterprise GPU, or run gpt-oss-20b locally. Notably, these are not stripped-down replicas: they are fast, capable, and designed with real-world deployment in mind, whether that means reasoning at scale in the cloud or agentic tasks at the edge.

And because they are open-weight, these models are also easy to fine-tune, distill, and optimize. Whether you are adapting a domain-specific copilot, compressing for offline inference, or prototyping locally before scaling in production, Azure AI Foundry and Foundry Local give you the tooling to do it all securely, efficiently, and without compromise.

Open models, real momentum

Open models have moved from the margins to the mainstream. Today they power everything from autonomous agents to domain-specific copilots, and they are redefining how AI gets built and deployed. With Azure AI Foundry, we are giving you the infrastructure to move with that momentum:

- With open weights, teams can fine-tune using parameter-efficient methods (LoRA, QLoRA, PEFT), splice in proprietary data, and ship new checkpoints in hours, not weeks.

- You can distill or quantize models, trim context length, or apply structured sparsity to hit strict memory envelopes for edge GPUs and even high-end laptops.

- Full weight access also means you can inspect attention patterns for security audits, inject domain adapters, retrain specific layers, or export to ONNX/Triton for containerized inference on Azure Kubernetes Service (AKS) or Foundry Local.

In short, open models are not just feature-parity replacements: they are programmable substrates. Azure AI Foundry provides training pipelines, weight management, and a low-latency serving backplane so you can use every one of those levers and push the envelope of AI customization.
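To make the "hours, not weeks" point concrete, here is a minimal sketch of why parameter-efficient methods such as LoRA are cheap to train. LoRA keeps the original weight matrix frozen and learns two small low-rank factors instead. The layer shape and rank below are hypothetical values chosen only for illustration; they are not gpt-oss dimensions.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Number of weights in a dense d_out x d_in matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights in the LoRA factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer shape and rank, for illustration only.
d_out, d_in, rank = 4096, 4096, 8

full = full_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
fraction = lora / full

print(f"full matrix:   {full:,} weights")
print(f"LoRA adapters: {lora:,} trainable weights")
print(f"trainable fraction: {fraction:.4%}")
```

Under these assumed numbers the adapters hold well under 1% of the weights of the layer they modify, which is the reason a fine-tuning run can be so much smaller than full retraining.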

Meet gpt-oss: Two models, infinite possibilities

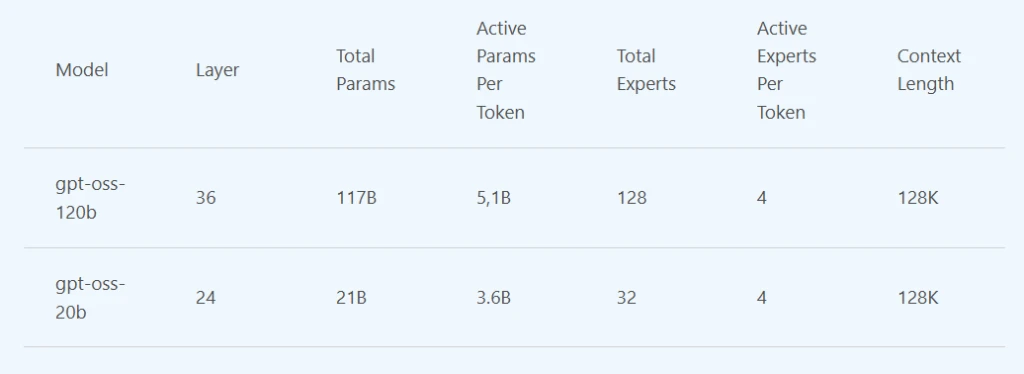

Today, gpt-oss-120b and gpt-oss-20b are available on Azure AI Foundry. gpt-oss-20b is also available on Windows AI Foundry and will soon come to macOS via Foundry Local. Whether you are optimizing for sovereignty, performance, or portability, these models unlock a new level of control.

- gpt-oss-120b is a reasoning powerhouse. With 120 billion parameters and architectural sparsity, it delivers o4-mini-level performance at a fraction of the size and excels at complex tasks such as math, code, and domain-specific Q&A, yet it is efficient enough to run on a single datacenter-class GPU. It is ideal for secure, high-performance deployments where latency or cost matters.

- gpt-oss-20b is tool-savvy and lightweight. Optimized for agentic tasks such as code execution and tool use, it runs efficiently on a range of Windows hardware, including discrete GPUs with 16GB+ VRAM, with support for more devices coming soon. It is ideal for building autonomous assistants or embedding AI into real-world workflows, even in bandwidth-constrained environments.
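A rough back-of-the-envelope check helps explain the 16GB+ VRAM figure. The sketch below estimates memory for weights only, assuming the nominal parameter counts implied by the model names and an assumed 4-bit or 16-bit storage format. It ignores the KV cache, activations, and runtime overhead, so treat the results as lower bounds rather than published requirements.

```python
def weight_memory_gb(num_params: int, bits_per_param: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def fits(num_params: int, bits_per_param: float, budget_gb: float) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weight_memory_gb(num_params, bits_per_param) <= budget_gb


# Nominal sizes taken from the model names; an assumption, not exact counts.
PARAMS_20B = 20_000_000_000
PARAMS_120B = 120_000_000_000

for label, params in (("gpt-oss-20b", PARAMS_20B), ("gpt-oss-120b", PARAMS_120B)):
    for bits in (16, 4):
        print(f"{label} at {bits}-bit: ~{weight_memory_gb(params, bits):.0f} GB of weights")

# 16 GB is the VRAM figure quoted in the text for gpt-oss-20b.
print("20b fits in 16 GB at 4-bit: ", fits(PARAMS_20B, 4, 16))
print("20b fits in 16 GB at 16-bit:", fits(PARAMS_20B, 16, 16))
```

Under these assumptions the 20b model only fits a 16 GB card when its weights are stored at low precision, which is why quantization matters so much for edge deployment.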

Both models will soon be API-compatible with the now-ubiquitous Responses API. This means you can swap them into existing apps with minimal changes and maximum flexibility.
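As an illustration of what "minimal changes" could look like, the sketch below builds (but does not send) a request in the Responses API shape, where the body carries a `model` name and an `input`. The base URL, API key, and bearer-style header are placeholders and assumptions; the actual endpoint and authentication scheme depend on your deployment and on compatibility being available.

```python
import json
import urllib.request


def build_responses_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build, without sending, a POST request in the Responses API shape."""
    body = {"model": model, "input": prompt}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/responses",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: bearer-token auth; your deployment may differ.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Hypothetical placeholder endpoint; replace with your deployment's URL.
BASE_URL = "https://example.invalid/v1"

request = build_responses_request(
    BASE_URL, "YOUR_API_KEY", "gpt-oss-120b", "Explain open-weight models in one sentence."
)
payload = json.loads(request.data)
print(request.get_method(), request.full_url)
print(payload)

# Swapping models only changes the "model" field of the request body.
swapped = build_responses_request(BASE_URL, "YOUR_API_KEY", "gpt-oss-20b", payload["input"])
print(json.loads(swapped.data)["model"])
```

Because only the `model` field changes between the two requests, existing application code built around this request shape would need little or no restructuring.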

Bringing gpt-oss to cloud and edge

Azure AI Foundry is more than a model catalog: it is a platform for AI builders. With more than 11,000 models and growing, it gives developers a unified space to evaluate, fine-tune, and productionize models with enterprise-grade reliability and security.

Today, with gpt-oss in the catalog, you can:

- Spin up inference endpoints using gpt-oss in the cloud with just a few CLI commands.

- Fine-tune and distill the models using your own data and deploy with confidence.

- Mix open and proprietary models to meet the needs specific to the task.

For organizations developing scenarios only possible on client devices, Foundry Local brings prominent open-source models to Windows AI Foundry, pre-optimized for inference on your own hardware, with support for CPUs, GPUs, and NPUs through a simple CLI, API, and SDK.

Whether you are working offline, building in a secure network, or running at the edge, Foundry Local and Windows AI Foundry let you go fully cloud-optional. With the ability to deploy gpt-oss-20b on modern high-performance Windows PCs, your data stays where you want it, and the power of frontier-class models comes to you.

This is hybrid AI in action: the ability to mix and match models, optimize for performance and cost, and meet your data where it lives.
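One way to picture this mix-and-match approach is a small routing rule that decides where a task runs. The sketch below is purely hypothetical: the `Task` fields, the routing policy, and the `local:` and `cloud:` labels are invented for illustration and do not correspond to any Foundry API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a workload to be routed."""
    name: str
    must_stay_on_device: bool
    needs_heavy_reasoning: bool


def choose_deployment(task: Task) -> str:
    """Pick a target under an assumed policy: data locality first, then capability."""
    if task.must_stay_on_device:
        return "local:gpt-oss-20b"
    if task.needs_heavy_reasoning:
        return "cloud:gpt-oss-120b"
    return "local:gpt-oss-20b"


tasks = [
    Task("summarize private notes", must_stay_on_device=True, needs_heavy_reasoning=False),
    Task("multi-step math analysis", must_stay_on_device=False, needs_heavy_reasoning=True),
    Task("rename files with a tool call", must_stay_on_device=False, needs_heavy_reasoning=False),
]

routes = {task.name: choose_deployment(task) for task in tasks}
for name, target in routes.items():
    print(f"{name} -> {target}")
```

A real policy would likely also weigh latency, cost, and compliance requirements; the point here is only that the same application can target either model depending on where the data lives.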

Empowering builders and decision makers

The availability of gpt-oss on Azure and Windows unlocks powerful new possibilities for both builders and business leaders.

For developers, open weights mean full transparency. Inspect the model, customize it, fine-tune it, and deploy it on your own terms. With gpt-oss you can build with confidence, understanding exactly how your model works and how to improve it for your use case.

For decision makers, it is about control and flexibility. With gpt-oss you get competitive performance with no black boxes, fewer trade-offs, and more options across deployment, compliance, and cost.

A vision for the future: Open and responsible AI, together

The release of gpt-oss and its integration into Azure and Windows is part of a bigger story. We envision a future where AI is ubiquitous, and we are committed to being an open platform that brings these innovative technologies to our customers across all our data centers and devices.

By offering gpt-oss through a variety of entry points, we are doubling down on our commitment to democratize AI. We recognize that our customers will benefit from a diverse portfolio of models, both proprietary and open, and we are here to support whichever path unlocks value for you. Whether you work with open-source models or proprietary ones, Foundry's built-in safety and security tools ensure consistent governance, compliance, and trust, so customers can innovate confidently across all model types.

Our support for gpt-oss is the latest step in our commitment to open tools and standards. In June we announced that the GitHub Copilot Chat extension is now open source on GitHub under the MIT license, our first step toward making VS Code an open-source AI editor. We seek to accelerate innovation with the open-source community and drive more value to our market-leading developer tools. This is what it looks like when research, product, and platform come together. The breakthroughs we have enabled with our cloud at OpenAI are now open tools that anyone can build on, and Azure is the bridge that brings them to life.

Next steps and resources for navigating gpt-oss

- Deploy gpt-oss in the cloud today with a few CLI commands using Azure AI Foundry. Browse the Azure AI model catalog to spin up an endpoint.

- Deploy gpt-oss-20b on your Windows device today (and soon on macOS) via Foundry Local. Follow the QuickStart guide to learn more.

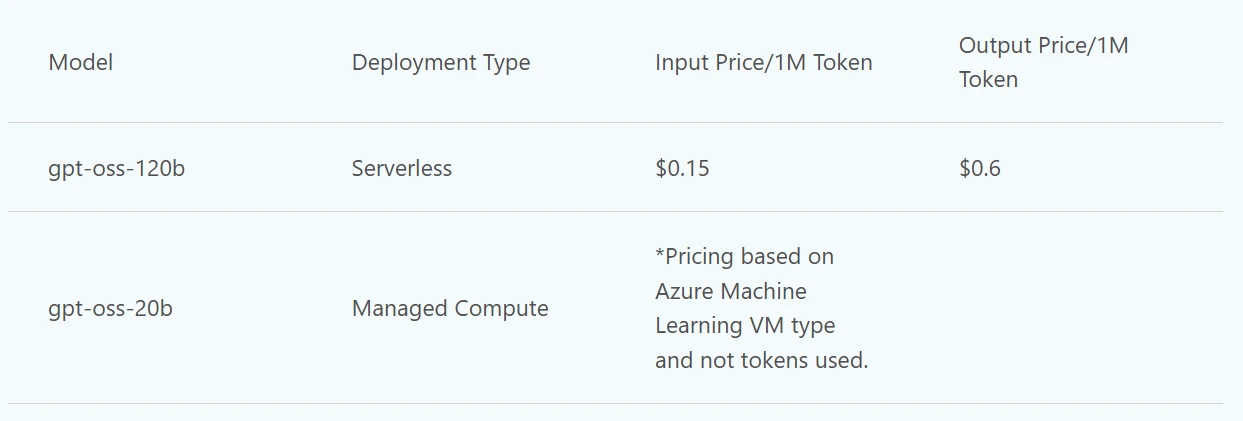

- Pricing¹ for these models is as follows:

*See the Managed Compute pricing page.

¹Pricing is accurate as of August 2025.
